To create the point cloud, connect the deep input of the DeepToPoint node to the deep image. You can use the points for position reference. The DepthToPoints node is used to create a point cloud using the deep data. Note:You can use the DeepSample node to know the precise depth values and then enter them in the A, B, C, and D fields. You can use the mix slider to blend between the color corrected output and the original image. The value in A field indicates the depth where the color correction will start, values in the B and C fields indicate the range where the color correction will be at full effect, and value in the D field indicates the depth where the color-correction effect stops. Select the limit_z check box and then adjust the trapezoid curve the values in the A, B, C, and D fields will change. The options in this tab are used to control the depth range where the effect of the color-correction will be visible. The DeepColorCorrect node is the ColorCorrect node for deep images with an additional Masking tab. The tiled OpenEXR files are not supported by this node. It is used to render all upstream deep nodes to OpenEXR 2.0 format. The DeepWrite is the Write node for deep images. It converts a deep image to a regular 2D image. The DeepToImage node is used to flatten a deep image. Move the widget in the Viewer panel to display the sample information in the DeepSample node properties panel. When you add a DeepSample node in the Node Graph panel, a pos widget will be displayed in the Viewer panel. The DeepSample node is used to sample a pixel in the deep image.

The DeepReformat node is the Reformat node for deep images. If you want to limit the z translate and z scale effects to the non-black areas of the mask, connect an image to the mask input of the DeepTransform node. The zscale parameter is used to scale the z depth values of the samples. You can use the x, y, and z fields corresponding to the translate parameter are used to move the deep data. The DeepTransform node is used to re-position the deep data along the x, y, and z axes. As a result, samples in the B input will be removed or fade out that are occluded by the samples in the A input. If you select holdout from the operation drop-down, the samples from the B input will be hold out by the samples in the A input. When this check box is selected, all the samples that have an alpha value of 1 and are behind other samples will be discarded. The drop hidden samples check box will be only available, if you select combine from the operation drop-down. As a result, Nuke combines samples from the A and B inputs. By default, combine is selected in this drop-down. The options in the operation drop-down in the DeepMerge tab of the DeepMerge node properties panel are used to specify the method for combining the deep images. You can use these inputs to connect the deep images you want to merge. The DeepMerge node is used to merge multiple deep images. The parameters in the DeepRead node properties panel are similar to that of the Read node. Note: The tiled OpenEXR 2.0 files are not supported by Nuke.

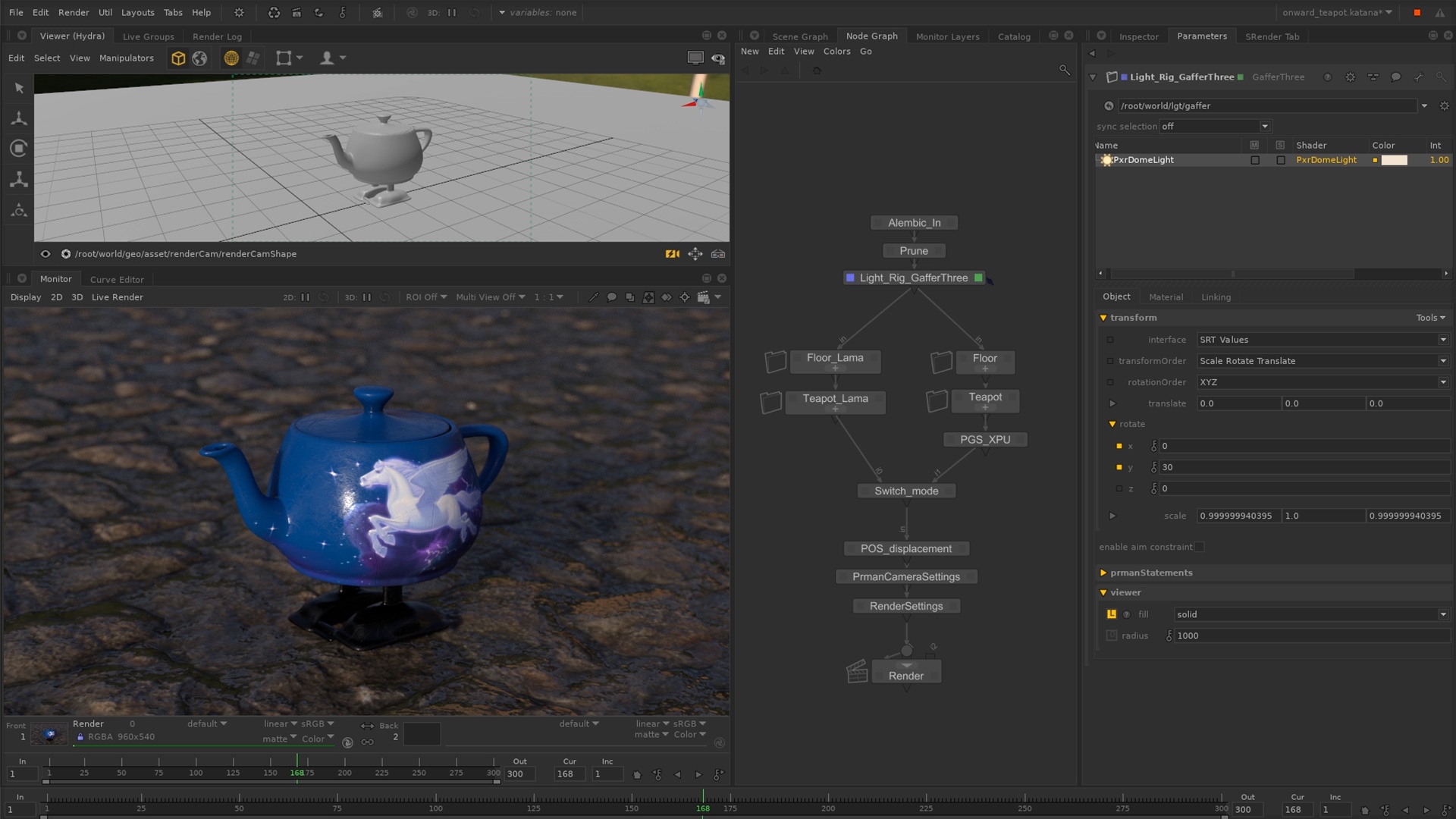

In Nuke, you can read deep images in two formats: DTEX (Generated by Pixar's PhotoRealistic Renderman Pro Server) and Scanline OpenEXR 2.0. The DeepRead node is used to read the deep images to the script. Each sample contains per pixel information about color, opacity, and depth. Deep images contain multiple samples per pixel at various depths. You need to render the background once and then you can move the foreground objects at different places and depth in the scene. Also, it reduces the need to re-render the image. It helps in eliminating artifacts around the edges of the objects. Deep compositing is a way to composite images with additional depth data. Nuke's powerful deep compositing tools set gives you ability to create high quality digital images faster.
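To make the two DeepMerge operations more concrete, here is a minimal pure-Python sketch of how combine (with and without drop hidden samples) and holdout could treat the samples of a single pixel. It is not Nuke's implementation or scripting API: the `Sample` type, the function names, and the choice that holdout returns only the attenuated B samples are assumptions for illustration. Depth is assumed to increase away from the camera, and colors are assumed to be premultiplied.

```python
from typing import List, NamedTuple, Tuple


class Sample(NamedTuple):
    """Hypothetical deep sample (not Nuke's API): depth, premultiplied RGB, alpha."""
    depth: float
    rgb: Tuple[float, float, float]
    alpha: float


def combine(a: List[Sample], b: List[Sample], drop_hidden: bool = False) -> List[Sample]:
    """Model of 'combine': keep every sample from both inputs, ordered by depth."""
    merged = sorted(a + b, key=lambda x: x.depth)
    if drop_hidden:
        # Assumed rule: anything strictly behind the nearest opaque sample is hidden.
        opaque = [x.depth for x in merged if x.alpha >= 1.0]
        if opaque:
            nearest = opaque[0]  # merged is sorted, so the first opaque depth is the nearest
            merged = [x for x in merged if x.depth <= nearest]
    return merged


def holdout(a: List[Sample], b: List[Sample]) -> List[Sample]:
    """Model of 'holdout': attenuate each B sample by the A samples in front of it."""
    result = []
    for s in sorted(b, key=lambda x: x.depth):
        visible = 1.0
        for h in a:
            if h.depth < s.depth:
                visible *= 1.0 - h.alpha
        if visible > 0.0:  # fully occluded B samples are removed outright
            result.append(Sample(s.depth, tuple(c * visible for c in s.rgb), s.alpha * visible))
    return result
```

For example, with an opaque sample in A at depth 1.0, `holdout` removes a B sample at depth 3.0 entirely but leaves a B sample at depth 0.5 untouched, because nothing in A lies in front of it; a half-transparent A sample would instead fade the deeper B sample to half strength.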
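The DeepTransform z controls can be pictured as a per-sample remapping of depth. The sketch below is a hypothetical model, not the DeepTransform implementation: `transform_depths` is an illustrative name, and it assumes that zscale is applied before the z translation and that a mask value between 0 (black) and 1 (white) blends linearly between the original and the transformed depth. The x and y translation, which move samples between pixels, are left out.

```python
from typing import List


def transform_depths(depths: List[float], translate_z: float = 0.0,
                     zscale: float = 1.0, mask: float = 1.0) -> List[float]:
    """Hypothetical model of DeepTransform's z controls for one pixel's samples.

    Assumptions: scale is applied before translation, and the mask value
    (0 = black, 1 = white) linearly blends the original and moved depth.
    """
    out = []
    for z in depths:
        moved = z * zscale + translate_z
        # With mask = 0 the sample keeps its depth; with mask = 1 it moves fully.
        out.append(z + mask * (moved - z))
    return out
```

Under these assumptions, `transform_depths([1.0, 2.0], translate_z=0.5, zscale=2.0)` moves the samples to depths 2.5 and 4.5, while a black mask (`mask=0.0`) leaves them where they were.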
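Flattening a deep image, as DeepToImage does, can be described as compositing each pixel's samples over one another from front to back. The following sketch shows that idea for one pixel. It is a generic model of deep flattening under stated assumptions (premultiplied color, smaller depth is closer to the camera), not DeepToImage's actual code, and `Sample` and `flatten` are hypothetical names.

```python
from typing import List, NamedTuple, Tuple


class Sample(NamedTuple):
    """Hypothetical deep sample: depth (smaller = closer), premultiplied RGB, alpha."""
    depth: float
    rgb: Tuple[float, float, float]
    alpha: float


def flatten(samples: List[Sample]) -> Tuple[Tuple[float, float, float], float]:
    """Collapse one deep pixel into a flat RGB + alpha value, front to back."""
    r = g = b = a = 0.0
    for s in sorted(samples, key=lambda x: x.depth):
        # A sample only contributes through the coverage not yet used up in front of it.
        remaining = 1.0 - a
        r += remaining * s.rgb[0]
        g += remaining * s.rgb[1]
        b += remaining * s.rgb[2]
        a += remaining * s.alpha
    return (r, g, b), a


# Half-transparent red in front of opaque blue; the input order does not matter.
pixel = [Sample(5.0, (0.0, 0.0, 1.0), 1.0), Sample(1.0, (0.5, 0.0, 0.0), 0.5)]
print(flatten(pixel))  # ((0.5, 0.0, 0.5), 1.0)
```

Because the samples are sorted before compositing, the result depends only on their depths, which is what lets you move foreground elements in depth without re-rendering the background.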
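The A, B, C, and D fields of DeepColorCorrect describe a trapezoid over depth. A minimal sketch of that weighting, assuming straight-line ramps between A and B and between C and D, is shown below. `trapezoid_weight` and `deep_gain` are hypothetical names, the simple gain is only a stand-in for the ColorCorrect controls, and the mix slider is modeled as a linear blend with the original color.

```python
from typing import Tuple

RGB = Tuple[float, float, float]


def trapezoid_weight(z: float, a: float, b: float, c: float, d: float) -> float:
    """Strength of the correction at depth z, for field values a <= b <= c <= d."""
    if z < a or z > d:
        return 0.0  # outside the A..D range: no effect
    if z < b:
        return (z - a) / (b - a)  # ramping in between A and B
    if z <= c:
        return 1.0  # full effect between B and C
    return (d - z) / (d - c)  # ramping out between C and D


def deep_gain(rgb: RGB, z: float, a: float, b: float, c: float, d: float,
              gain: float = 1.0, mix: float = 1.0) -> RGB:
    """Apply a hypothetical gain-only correction to one sample's color, limited in depth."""
    weight = trapezoid_weight(z, a, b, c, d) * mix
    # Blend from the original channel value toward the corrected one.
    return tuple(ch + weight * (ch * gain - ch) for ch in rgb)
```

With A = 1, B = 2, C = 4, and D = 5, a sample at depth 3 receives the full correction, a sample at depth 1.5 or 4.5 receives half of it, and a sample at depth 6 is left unchanged.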
